In this guide, we will look at the key differences between CPUs and GPUs, and why machine learning depends on GPUs.


What Are CPUs and GPUs?

CPU stands for Central Processing Unit, and GPU stands for Graphics Processing Unit. These two chips power almost everything in modern computing.

Yet they are designed for fundamentally different purposes.

Let’s look at each one.

CPU

The CPU is often referred to as the brain of the computer. It is a general-purpose processor designed to manage the entire system and coordinate the flow of data between hardware and software.

The CPU's architecture is latency-oriented, which means it is designed to complete individual tasks with minimal delay.

To achieve this, it uses a small number of powerful, complex processing units called cores, typically ranging from 4 to 64.

Each core works in a serial (sequential) mode, executing a program's instructions step by step.

Key components: Both CPUs and GPUs can perform billions of calculations every second, and both use fast on-chip memory called cache to improve processing performance.

In a CPU, the cache is arranged in levels labeled L1, L2, and L3, known as the cache hierarchy. L1 is the smallest and fastest, and L3 is the largest and slowest of the three.
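To make the cache hierarchy concrete, here is a minimal Python sketch of a lookup that checks L1, then L2, then L3, and falls back to main memory (RAM). The latency numbers, the `lookup` function, and the cache contents are all hypothetical, chosen only for illustration; real caches work on fixed-size cache lines and use hardware replacement policies that this sketch ignores.

```python
# Illustrative load-to-use latencies in CPU cycles. These numbers are
# assumptions for the example; real values differ from chip to chip.
CACHE_LEVELS = [("L1", 4), ("L2", 12), ("L3", 40)]
MAIN_MEMORY_LATENCY = 200  # assumed cost when no cache level holds the data


def lookup(address, caches):
    """Return (where the data was found, latency in cycles).

    `caches` maps each level name to the set of addresses it currently holds.
    Levels are checked from fastest (L1) to slowest (L3); if none holds the
    address, the data must come from main memory (RAM).
    """
    for name, latency in CACHE_LEVELS:
        if address in caches.get(name, set()):
            return name, latency
    return "RAM", MAIN_MEMORY_LATENCY


# Hypothetical cache contents: in this example each smaller level holds a
# subset of the next, larger one.
caches = {"L1": {0x10}, "L2": {0x10, 0x20}, "L3": {0x10, 0x20, 0x30}}
for addr in (0x10, 0x20, 0x30, 0x40):
    print(hex(addr), lookup(addr, caches))
```

The further down the hierarchy the data is found, the more cycles the CPU waits, which is why keeping frequently used data in L1 matters so much for latency.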

GPU

The global GPU market is projected to reach more than $100B in 2026 and could grow beyond $640B by 2034, with AI as the biggest driver.

GPUs were originally designed for rendering 3D graphics, such as in video games. Since then, they have evolved to become indispensable for AI research and development.

The GPU's architecture is throughput-oriented: it is built to maximize the total number of tasks completed simultaneously rather than the speed of any single task.

It also uses cores, but instead of a few powerful ones it has hundreds to thousands of smaller, simpler cores. Some modern chips exceed 18,000 cores.

GPUs use parallel execution, breaking large, complex problems into many smaller subtasks that are processed at the same time.
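As a rough illustration of breaking one problem into subtasks, the Python sketch below splits a sum of squares into independent chunks, computes each chunk concurrently, and combines the partial results. The helper names (`chunk`, `parallel_sum_of_squares`) are hypothetical, and this runs on CPU threads rather than a GPU. In CPython, the global interpreter lock prevents these pure-Python threads from running truly in parallel, so the point is the decomposition pattern, not the speed-up.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk(data, num_chunks):
    """Split `data` into `num_chunks` contiguous, nearly equal slices."""
    size, remainder = divmod(len(data), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        # The first `remainder` chunks get one extra element each.
        end = start + size + (1 if i < remainder else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def parallel_sum_of_squares(data, workers=4):
    """Break one job into independent subtasks, run them concurrently,
    then combine the partial results into the final answer."""
    parts = chunk(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(lambda part: sum(x * x for x in part), parts))
    return sum(partials)


values = list(range(1, 1001))
print(parallel_sum_of_squares(values))  # 333833500
```

Because no chunk depends on another, the subtasks can run in any order on any core, which is exactly the property GPUs exploit at a much larger scale.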

Some modern GPUs also include Tensor Cores, specialized units designed to accelerate the matrix multiply-accumulate (MMA) operations used in deep learning.
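For reference, the multiply-accumulate operation that Tensor Cores speed up can be written as follows, where A is M×K, B is K×N, and C and D are M×N. The 2×2 numbers are an arbitrary worked example. In hardware, Tensor Cores apply this to small fixed-size tiles, typically with low-precision inputs (such as FP16) and higher-precision accumulation; the exact tile sizes and precisions depend on the GPU generation.

```latex
D = AB + C, \qquad D_{ij} = \sum_{k=1}^{K} A_{ik} B_{kj} + C_{ij}

\begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}
\begin{pmatrix} 5 & 6 \\ 7 & 8 \end{pmatrix}
+ \begin{pmatrix} 1 & 1 \\ 1 & 1 \end{pmatrix}
= \begin{pmatrix} 19 & 22 \\ 43 & 50 \end{pmatrix}
+ \begin{pmatrix} 1 & 1 \\ 1 & 1 \end{pmatrix}
= \begin{pmatrix} 20 & 23 \\ 44 & 51 \end{pmatrix}
```

The multiply and the add are fused into one operation, so the running sum never has to leave the unit between steps.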

| Feature | CPU | GPU |
|---|---|---|
| Optimization | Latency (speed of a single task) | Throughput (volume of tasks) |
| Core type | Few, powerful, complex cores | Thousands of small, simple cores |
| Execution | Sequential/serial | Parallel |
| Ideal workload | OS tasks, complex logic, branching | Math, 3D rendering, AI training |
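The latency-versus-throughput trade-off can be illustrated with a toy timing model. The sketch below assumes a hypothetical CPU with 16 fast cores and a hypothetical GPU with 4,096 cores that are each ten times slower, and that every task is independent and the same size; none of these numbers describe a real chip, and `batch_time` is an illustrative helper.

```python
import math


def batch_time(num_tasks, cores, time_per_task):
    """Time to finish `num_tasks` independent, equal-sized tasks.

    Each core runs one task at a time, so tasks complete in "waves" of
    `cores` tasks; every wave takes `time_per_task`.
    """
    waves = math.ceil(num_tasks / cores)
    return waves * time_per_task


# Hypothetical processors, chosen only to illustrate the trade-off.
CPU = {"cores": 16, "time_per_task": 1.0}     # few fast cores: low latency
GPU = {"cores": 4096, "time_per_task": 10.0}  # many slow cores: high throughput

for n in (1, 1_000_000):
    print(f"{n:>9} tasks  CPU: {batch_time(n, **CPU):>8}  GPU: {batch_time(n, **GPU):>8}")
```

Under these assumptions, a single task finishes in 1 time unit on the CPU versus 10 on the GPU, but a million tasks take 62,500 units on the CPU versus 2,450 on the GPU. Real workloads also pay for data transfer and scheduling, which this model leaves out.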

Now, let's dive into the core question:

Why Does ML Need GPUs?

Machine learning, especially deep learning, involves a large amount of mathematical computation during model training.

These computations are largely based on linear algebra operations such as:

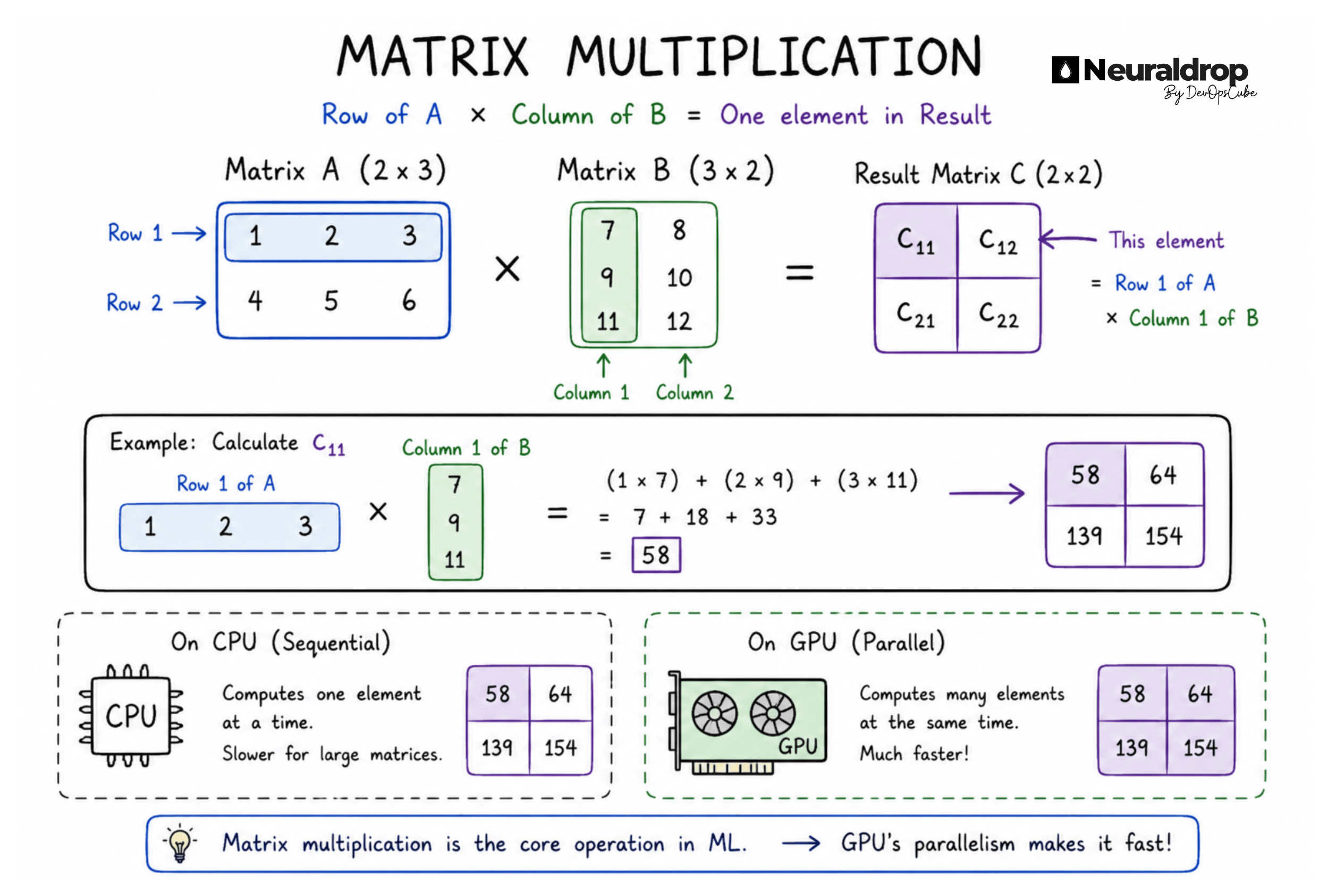

Matrix multiplication

Vector operations

Tensor transformations

Because these operations apply the same arithmetic independently to many data points, they are highly parallelizable.

For example:

A CPU may work through the individual calculations of a matrix operation largely step by step.

A GPU can perform thousands of those calculations simultaneously.
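Matrix multiplication shows where this parallelism comes from: each element of the result depends only on one row of A and one column of B. The Python sketch below (with hypothetical helper names) computes every element as a separate task, in a shuffled order, and still gets the correct product. On a GPU, each of these tasks could be handed to its own thread.

```python
import random


def output_element(a, b, i, j):
    """One independent task: the dot product of row i of A and column j of B."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_as_independent_tasks(a, b, seed=0):
    """Compute C = A x B by treating each output element as its own task."""
    m, n = len(a), len(b[0])
    tasks = [(i, j) for i in range(m) for j in range(n)]
    # Shuffle to show that order does not matter: no task depends on another.
    random.Random(seed).shuffle(tasks)
    c = [[0] * n for _ in range(m)]
    for i, j in tasks:
        c[i][j] = output_element(a, b, i, j)
    return c


A = [[1, 2, 3], [4, 5, 6]]
B = [[7, 8], [9, 10], [11, 12]]
print(matmul_as_independent_tasks(A, B))  # [[58, 64], [139, 154]]
```

A 4096×4096 product has about 16.8 million such independent dot products, which is why it maps so naturally onto thousands of GPU cores.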

SIMT (Single Instruction, Multiple Threads)

GPUs use a Single Instruction, Multiple Threads execution model, where a thread is the smallest unit of execution inside a program. A single instruction is dispatched to a group of 32 or more threads at once (NVIDIA calls a group of 32 threads a warp).

In simple terms: instead of telling each thread what to do separately, the GPU gives one instruction to a group of threads, and they all execute it at the same time, each on its own piece of data.
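The following Python sketch simulates the SIMT idea on a CPU: every thread runs the same function (here SAXPY, `out[i] = a * x[i] + y[i]`), and each thread uses its own ID to choose which element to work on. `WARP_SIZE = 32` matches NVIDIA's warp size; the function names are hypothetical, and real hardware executes a warp's threads in lockstep rather than in a Python loop.

```python
WARP_SIZE = 32  # NVIDIA groups threads into warps of 32


def saxpy_kernel(thread_id, a, x, y, out):
    """The single instruction stream every thread runs: out[i] = a * x[i] + y[i].
    Each thread uses its own ID to pick the element it works on."""
    if thread_id < len(x):  # threads past the end of the data do nothing
        out[thread_id] = a * x[thread_id] + y[thread_id]


def run_in_warps(kernel, num_elements, *args):
    """Simulate SIMT: issue the same kernel to every thread of each warp.
    Hardware would run the 32 lanes of a warp at the same time."""
    num_warps = (num_elements + WARP_SIZE - 1) // WARP_SIZE  # round up
    for warp in range(num_warps):
        for lane in range(WARP_SIZE):
            kernel(warp * WARP_SIZE + lane, *args)


n = 100
x = [float(i) for i in range(n)]
y = [1.0] * n
out = [0.0] * n
run_in_warps(saxpy_kernel, n, 2.0, x, y, out)
print(out[:4], out[-1])  # [1.0, 3.0, 5.0, 7.0] 199.0
```

The bounds check matters: 100 elements need 4 warps (128 threads), so the last 28 threads have no element and simply do nothing.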

Why this matters for ML:

Faster model training

Efficient handling of large datasets

Better performance for deep neural networks

Significant reduction in training cost and time

Why Enterprise GPUs over Gaming GPUs?

Now you might ask:

“I would rather buy a gaming GPU. Why should I choose a specialized GPU for ML training?”

While it is possible to run small ML models on a gaming laptop, professional and large-scale AI requires specialized enterprise-level GPUs for several technical reasons:

VRAM: Modern large language models (LLMs) have massive memory footprints. For example, a 70-billion-parameter model stored in 16-bit precision (2 bytes per parameter) needs about 140 GB of memory just to load its weights.

Gaming GPUs typically come with 8 to 24 GB of VRAM, while flagship enterprise GPUs offer 192 GB or more of HBM3e memory, which allows large models to fit and run on a single GPU.
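The 140 GB figure comes from simple arithmetic: parameter count times bytes per parameter. The Python sketch below (a hypothetical helper, using decimal gigabytes) repeats it for a few common precisions. It counts the weights only; running or training a model needs extra memory for activations, the KV cache, gradients, and optimizer state.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16/bf16": 2, "int8": 1, "int4": 0.5}


def weights_gb(num_params, dtype):
    """Memory needed just to hold the model weights, in decimal gigabytes.
    Ignores activations, KV cache, gradients, optimizer state and overhead."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


params_70b = 70e9
for dtype in BYTES_PER_PARAM:
    gb = weights_gb(params_70b, dtype)
    print(f"{dtype:>9}: {gb:6.1f} GB | fits 24 GB: {gb <= 24} | fits 192 GB: {gb <= 192}")
```

For 70 billion parameters this gives 280 GB in FP32, 140 GB in FP16/BF16, 70 GB in INT8, and 35 GB in 4-bit, so even an aggressively quantized copy does not fit in 24 GB, while every precision except FP32 fits in 192 GB.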

Memory bandwidth: Machine learning is often limited by how fast data can be fed to the compute cores. Enterprise GPUs use High Bandwidth Memory (HBM), which reaches 3.35 TB/s (H100) to 4.8 TB/s (H200). Gaming GPUs use GDDR memory, which offers lower bandwidth, around 1 TB/s on high-end cards.
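A quick way to see why bandwidth matters: when a dense LLM generates text one token at a time (batch size 1), it has to read essentially all of its weights from memory for every token. The sketch below computes the lower bound on that read time for 140 GB of weights at the bandwidths quoted above. It assumes peak bandwidth is fully achieved, which real kernels never quite reach, and the 1 TB/s row is hypothetical because a 24 GB gaming card could not hold these weights in the first place. `min_read_time_ms` is an illustrative helper.

```python
def min_read_time_ms(bytes_to_read, bandwidth_tb_s):
    """Lower bound on the time to stream `bytes_to_read` from GPU memory once,
    assuming the peak bandwidth (in TB/s) is fully achieved."""
    return bytes_to_read / (bandwidth_tb_s * 1e12) * 1e3


weights = 140e9  # 70B parameters in 16-bit precision
for name, bw in [("H100 (HBM3)", 3.35), ("H200 (HBM3e)", 4.8), ("gaming GPU (GDDR)", 1.0)]:
    print(f"{name:>18}: {min_read_time_ms(weights, bw):6.1f} ms per full pass over the weights")
```

This works out to roughly 41.8 ms on an H100, 29.2 ms on an H200, and 140 ms at 1 TB/s per generated token, so memory bandwidth alone caps single-stream generation speed.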

Interconnect speed (NVLink): Professional ML often requires clustering. When we train very large machine learning models, one GPU is not enough, so we need to use multiple GPUs working together.

Technologies like NVLink allow enterprise GPUs to communicate with each other much faster than the standard PCIe bus used in gaming systems.

NVLink is a high-speed interconnect developed by NVIDIA for direct GPU-to-GPU communication.

Peripheral Component Interconnect Express (PCIe) is a high-speed expansion bus standard used to connect hardware devices such as graphics cards, SSDs, and network adapters to a motherboard.
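To get a feel for why interconnect speed matters, the sketch below uses the standard idealized cost of a ring all-reduce, a common way for GPUs to sum their gradients: each of the N GPUs sends and receives about 2(N - 1)/N times the message size. The bandwidths are approximate per-direction figures assumed for illustration (about 450 GB/s for H100 NVLink, about 64 GB/s for a PCIe 5.0 x16 link), `ring_allreduce_seconds` is a hypothetical helper, and the model ignores latency, topology, and overlap with computation.

```python
def ring_allreduce_seconds(message_bytes, num_gpus, link_gb_s):
    """Idealized ring all-reduce time: each GPU sends (and receives)
    2 * (N - 1) / N * S bytes over a link of `link_gb_s` GB/s per direction.
    Ignores per-message latency, topology and compute overlap."""
    bytes_per_gpu = 2 * (num_gpus - 1) / num_gpus * message_bytes
    return bytes_per_gpu / (link_gb_s * 1e9)


grads = 140e9  # 16-bit gradients of a 70B-parameter model
links = [("NVLink (H100, ~450 GB/s per direction)", 450),
         ("PCIe 5.0 x16 (~64 GB/s per direction)", 64)]
for name, bw in links:
    print(f"{name}: {ring_allreduce_seconds(grads, 8, bw):.2f} s per sync across 8 GPUs")
```

For 140 GB of 16-bit gradients across 8 GPUs, each GPU moves 245 GB, which takes about 0.54 s over NVLink versus about 3.83 s over PCIe for every synchronization step.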

Biggest GPU Market Segments

This is where most of the money is flowing. Companies are buying GPUs for the following workloads:

LLM training

AI inference

Agentic AI

RAG (retrieval-augmented generation) systems

Video AI

Robotics

Scientific computing

NVIDIA alone recently reported over $62B in quarterly data center revenue.

Conclusion

Machine learning isn’t just about smarter models. It is about faster computation.

From matrix multiplication to parallel threads and high-speed GPU communication, the real power of AI comes from doing more work at the same time.

Behind every breakthrough model is hardware designed for parallelism, turning massive mathematical workloads into practical solutions.

As models grow larger and more complex, the ability to scale computation efficiently becomes just as important as the algorithms. In the end, progress in AI is not just driven by ideas but by how fast we can execute them.