👋 Hey there, welcome back.

In today’s edition, we will look into Google’s TurboQuant algorithm: what problem it solves, why it’s a big deal, and more.

What is the TurboQuant algorithm?

TurboQuant is the latest algorithm launched by Google Research, in March 2026.

It is a compression algorithm that can shrink the working memory of large AI models (the KV cache) by at least 6x, with zero accuracy loss and no retraining required.

This makes AI faster, cheaper, and smarter without changing anything about the AI model itself. It is purely a compression trick applied to how the AI stores its working memory.

Simple analogy:

Think of it like converting a huge, uncompressed WAV audio file into a tiny MP3 file, except that the MP3 is nearly indistinguishable from the original WAV in terms of quality.

How does TurboQuant achieve this?

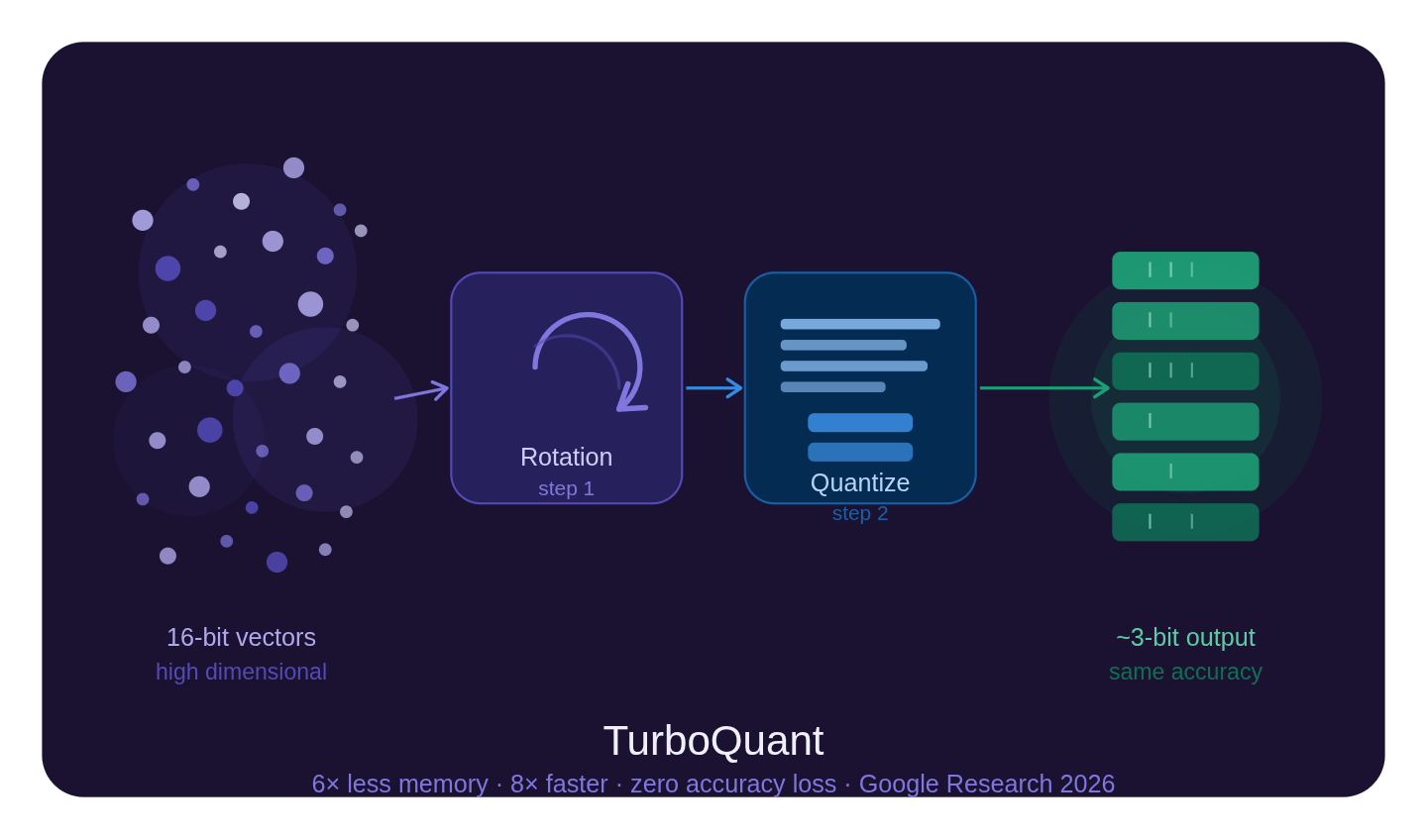

The TurboQuant algorithm mainly uses two core steps:

Random Rotation - Before compression, TurboQuant applies a random mathematical rotation to the data vectors. The goal of this step is to spread the data out evenly so that every coordinate follows the same uniform, predictable pattern.

Scalar Quantization - After rotation, TurboQuant compresses each value individually, from high-precision 16-bit numbers down to just 3 bits. Because the rotation made the data uniform, this can be done with a simple, well-known technique called Lloyd-Max scalar quantization, and the result is nearly indistinguishable from the original.
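To make the scalar quantization step concrete, here is a minimal Python sketch of a 3-bit Lloyd-Max quantizer. It is not Google's implementation: the names `lloyd_max_codebook` and `quantize` are hypothetical, and it assumes (as a simplification) that rotated coordinates can be treated as roughly standard normal after rescaling, so the 8 levels are fitted to Gaussian samples using Lloyd's algorithm.

```python
import random
import statistics


def lloyd_max_codebook(samples, bits=3, iters=100):
    """Fit 2**bits reconstruction levels to `samples` with Lloyd's algorithm.

    Alternates two steps: assign each sample to its nearest level, then move
    each level to the mean of the samples assigned to it.
    """
    k = 2 ** bits
    data = sorted(samples)
    # Start from evenly spaced quantiles of the data.
    levels = [data[int((i + 0.5) * len(data) / k)] for i in range(k)]
    for _ in range(iters):
        # With sorted levels, nearest-level regions are split at the midpoints.
        bounds = [(a + b) / 2 for a, b in zip(levels, levels[1:])]
        buckets = [[] for _ in range(k)]
        j = 0
        for v in data:  # data is sorted, so one sweep assigns every sample
            while j < k - 1 and v > bounds[j]:
                j += 1
            buckets[j].append(v)
        # Keep the old level if a bucket happens to be empty.
        levels = [statistics.fmean(b) if b else old for b, old in zip(buckets, levels)]
    return levels


def quantize(x, levels):
    """Return the index of the nearest level: a 3-bit code when there are 8 levels."""
    return min(range(len(levels)), key=lambda i: abs(x - levels[i]))


rng = random.Random(0)
# Assumption: rotated, rescaled coordinates behave roughly like N(0, 1).
train = [rng.gauss(0.0, 1.0) for _ in range(10_000)]
levels = lloyd_max_codebook(train, bits=3)

coords = [rng.gauss(0.0, 1.0) for _ in range(5)]
for x in coords:
    code = quantize(x, levels)
    print(f"{x:+.3f} -> code {code} -> {levels[code]:+.3f}")
```

In this sketch each coordinate is stored only as a 3-bit index into `levels`. Because every coordinate is assumed to follow the same distribution after rotation, one small codebook can be shared across all of them.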

The problem it solves

When an AI like Gemini or ChatGPT is having a conversation with us, it needs to remember everything we said in the chat to give accurate and relevant responses.

This memory is stored in something called the KV cache (key-value cache). Think of it as the AI’s notepad during the conversation.

In large language models (LLMs), the KV cache acts as a temporary notebook: every round of dialogue and every segment of input text is converted into high-dimensional key and value vectors.

These vectors are kept to provide context for later inference steps. Because the cache grows with every token in the conversation, it becomes extremely large and expensive to maintain.
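A back-of-the-envelope calculation shows why the cache gets so large. The sketch below uses the common estimate of 2 (one key and one value) × layers × KV heads × head dimension × tokens × bits per value. The model configuration is hypothetical, not that of Gemini or ChatGPT, and the estimate ignores any per-vector metadata a real quantized cache would also need to store.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bits_per_value: float) -> float:
    """Approximate KV cache size in bytes for a single sequence.

    The factor of 2 accounts for storing both a key vector and a value
    vector per token, per layer, per KV head.
    """
    num_values = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return num_values * bits_per_value / 8


# Hypothetical model configuration, chosen only for illustration.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=128_000)

fp16 = kv_cache_bytes(**config, bits_per_value=16)
q3 = kv_cache_bytes(**config, bits_per_value=3)

print(f"16-bit KV cache: {fp16 / 2**30:.2f} GiB")
print(f" 3-bit KV cache: {q3 / 2**30:.2f} GiB")
```

Doubling `seq_len` doubles both sizes, which is why long conversations get expensive, while lowering `bits_per_value` shrinks the cache proportionally.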

How does TurboQuant fix it?

TurboQuant is a quantization algorithm: a method that compresses numerical data from a high-precision format (like 32-bit or 16-bit floating point) down to a much smaller format, such as 3 or 4 bits.

Simple example:

Old way: storing the number 3.14159265 (32 bits)

TurboQuant way: storing a close approximation such as 3.1, picked from the 8 values that 3 bits can represent (just 3 bits)

Almost the same, but about 10x smaller (32 ÷ 3 ≈ 10.7).
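The example above can be sketched with a generic min-max uniform quantizer, which maps each value to one of 2^3 = 8 evenly spaced levels. This is a simpler scheme than the Lloyd-Max quantizer the article attributes to TurboQuant, and the helper names are hypothetical. Note that this scheme also has to store the minimum and the step size alongside the 3-bit codes.

```python
def quantize_uniform(values, bits=3):
    """Map each float to an integer code in [0, 2**bits - 1] on a uniform grid
    spanning the vector's min and max (a generic scheme, not TurboQuant's)."""
    steps = 2 ** bits - 1                  # 7 steps -> 8 representable levels
    lo, hi = min(values), max(values)
    scale = (hi - lo) / steps or 1.0       # avoid division by zero for constant input
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize_uniform(codes, lo, scale):
    """Reconstruct approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


values = [3.14159265, 2.71828183, 1.41421356, 0.57721566, 1.61803399]
codes, lo, scale = quantize_uniform(values, bits=3)
approx = dequantize_uniform(codes, lo, scale)

for v, c, a in zip(values, codes, approx):
    print(f"{v:.8f} -> code {c} (3 bits) -> {a:.3f}")
```

Every reconstructed value is within half a step (`scale / 2`) of the original, so the error shrinks as the spread of the data shrinks. This is one reason making the data uniform and predictable before quantizing helps.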

TurboQuant applies a random rotation to input vectors, which makes all the data coordinates behave uniformly and predictably regardless of what the original data looked like. This simplifies compression dramatically.
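The effect of the rotation can be seen in a small experiment. This sketch builds a random orthogonal matrix by Gram-Schmidt on Gaussian vectors and applies it to a vector whose energy is concentrated in one outlier coordinate. The Gram-Schmidt construction and the helper names are assumptions chosen for clarity; the article does not say how TurboQuant builds its rotation.

```python
import math
import random


def random_rotation(d, rng):
    """Build a random d x d orthogonal matrix via Gram-Schmidt on Gaussian vectors."""
    basis = []
    while len(basis) < d:
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for b in basis:  # remove the component along each earlier row
            dot = sum(x * y for x, y in zip(v, b))
            v = [x - dot * y for x, y in zip(v, b)]
        norm = math.sqrt(sum(x * x for x in v))
        if norm > 1e-10:  # skip (vanishingly unlikely) degenerate draws
            basis.append([x / norm for x in v])
    return basis


def matvec(m, x):
    """Multiply matrix m (list of rows) by vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def transpose(m):
    return [list(col) for col in zip(*m)]


d = 64
rng = random.Random(0)
R = random_rotation(d, rng)

# A spiky vector: almost all of its energy sits in one "outlier" coordinate.
x = [0.01] * d
x[5] = 10.0

y = matvec(R, x)                  # rotated vector
x_back = matvec(transpose(R), y)  # R is orthogonal, so its transpose undoes it

print("max |coord| before:", max(abs(v) for v in x))
print("max |coord| after: ", round(max(abs(v) for v in y), 3))
print("norm before/after: ", math.hypot(*x), math.hypot(*y))
```

The rotation preserves the vector's length and is undone exactly by the transpose, but the largest coordinate drops well below 10 because the outlier's energy is spread across all 64 coordinates. Values on a similar scale are much easier to quantize with a single shared set of levels.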

Google reports up to 8x faster attention computation and a 6x reduction in KV cache memory on H100 GPUs.

6x less memory

8x faster

Zero accuracy loss

No retraining needed

Why is it a big deal?

This fundamentally changes the economics of AI.

TurboQuant is a software-only breakthrough: a training-free way to shrink the memory AI models need at inference time without sacrificing intelligence, which could cut costs for enterprises by more than 50%.

If Google adopts this in Search, AI Overviews could pull from a broader, more precise set of sources, making it easier to generate instant summaries from large data pools.

The news even sent the stock prices of memory chip makers Micron and SK Hynix sharply lower, because if AI needs less memory, demand for expensive memory chips could drop!

TurboQuant isn’t just an optimization, it’s a shift in how efficiently AI can scale.

Here is the official document; check it out.